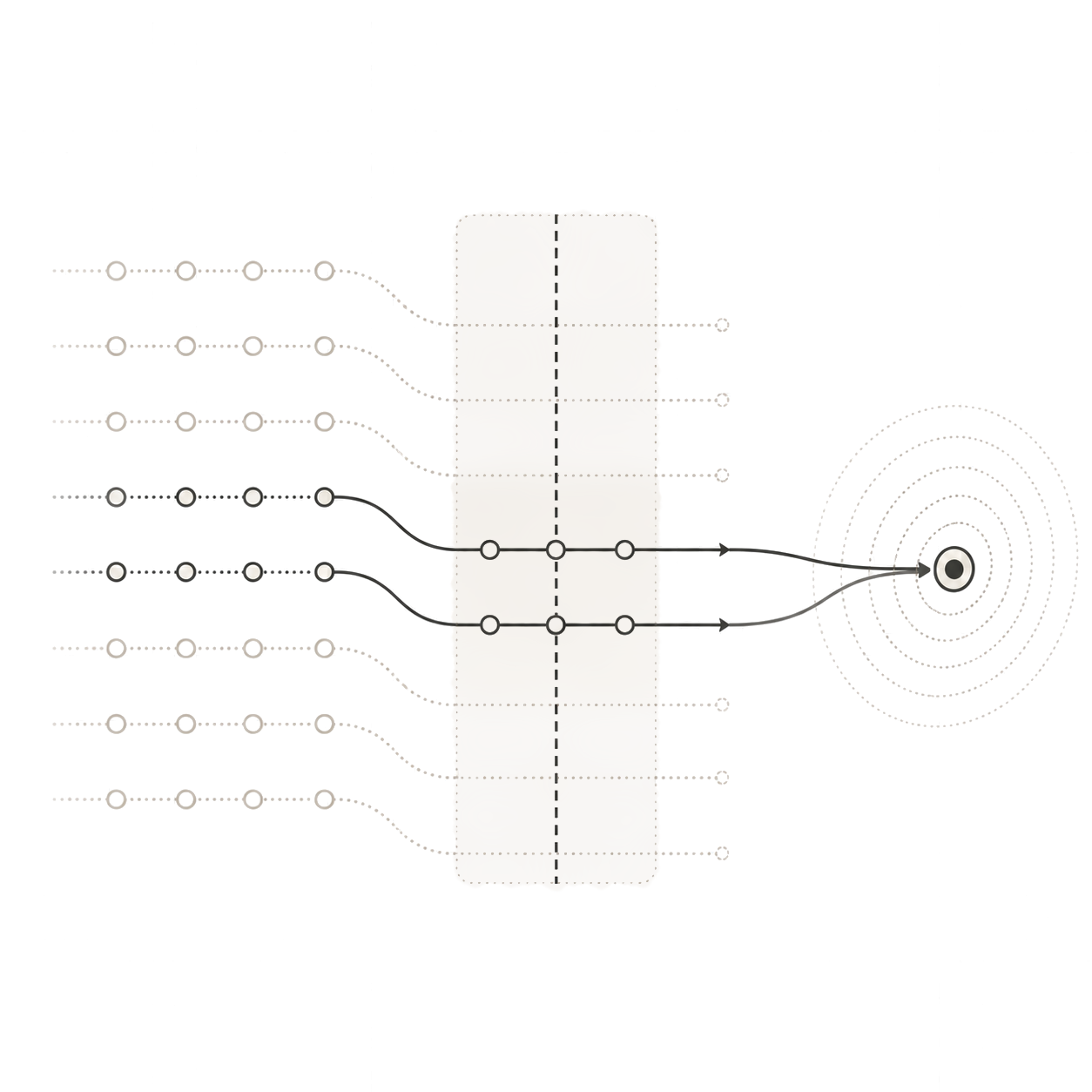

Capability differences between models can create an information asymmetry: the same prompt may induce computation-dependent content that a stronger model can recover while a weaker model cannot - a gap we term a capability-gated side-channel. Crucially, this computation need not appear in the model's explicit chain-of-thought or in any readable input or output. Instead, the relevant content can exist only as transient internal reasoning state - what we refer to here as out-of-context reasoning (OoCR). In stylized prompt-only settings, this OoCR is sufficient to produce hidden steering signals that influence later outputs. Specifically, we claim to show that:

- capability differences between models translate into hidden OoCR capability differences. Stronger models more reliably perform longer OoCR tasks and can run more OoCR tasks in parallel

- prompt-only pointer-chain gadgets provide a simple, tunable way to induce this out-of-context reasoning, with chain depth acting as a difficulty dial and chain width as a bandwidth dial

- the recovered computation can steer later lexical choices and binary judgments in a computation-dependent way, even when the relevant content is never verbalized in chain-of-thought and never appears as readable plaintext in the input or output

- intermediate values used in solving an OoCR task can also steer later outputs - open-weight models show failures in effective suppression of intermediate values after use. Concerningly, asymmetries between how pointer-chains are solved in CoT and in OoCR can conceal influential intermediates from CoT

- pointer-chain concealed messages fail to create broad semantic influence, only showing narrow semantic transfer in some settings. An empirically observed task compartmentalisation effect and mechanistically late message emergence are proposed as mediating factors

- with an added attention sink, OoCR effects can persist across tens-of-thousands of tokens of unrelated conversation

- identical prompts can be tuned to influence stronger models (e.g. Opus 4.5) while leaving weaker models (e.g. Sonnet 4.5) largely unaffected

- open-weight mechanistic analysis supports the claim that pointer chains are realized through genuine internal serial computation in hidden model states, rather than superficial string matching or explicit verbal reasoning

Taken together, these results demonstrate a capability-targetable form of stenographic reasoning in stylized settings, but do not compose into a realistic end-to-end attack. The components demonstrated - hidden computation, downstream influence, model-tier targeting - are sufficient to suggest a plausible monitoring gap: a weaker model, or an oversight method that relies on visible prompt content or explicit chain-of-thought, may process the same prompt without recovering the hidden computation-dependent signal that influences a stronger model's behavior.

1. Motivating threat model

Classical monitoring assumes shared input means shared visibility. When the computing model can perform computations the observer cannot, the same prompt carries information only the stronger model can access.

We view the capability gap as a hidden side-channel:

Benchmarks serve as an existence proof of this capability side-channel. Evaluations capable of separating models offer opportunities to encode hidden messages as answers, where only the more capable model can decode and understand.

However, benchmarks today do not pose a realistic threat vector. They typically:

- (a) Require reasoning and output in plain text - visible to a monitor

- (b) Do not provide clean or adjustable difficulty segmentation

- (c) Require large, continuous investment to create and track SOTA capabilities

- (d) Face saturation challenges from training data contamination and capability gains

- (e) Offer low side-channel bandwidth compared to task size

A realistic threat must address each of these. We borrow a term from return-oriented programming, calling a prompt-only construct that exploits a capability gap a gadgetIn ROP, a gadget is a small reusable code fragment ending in a return instruction, chained together to build arbitrary computation from existing code. Our usage is analogous: a reusable prompt primitive that co-opts existing transformer circuits within a single forward pass, composable into a larger attack chain.. For a gadget to address each of these, it must:

- (a) Decode the hidden message within a forward-pass

- (b) Be incrementally difficult with respect to a capability that strongly segments

- (c) Be entirely computer generatable

- (d) Avoid rote-memorisation and shortcut-ability

- (e) Maximise hidden message size relative to prompt size across difficulties

2. Constructing a side-channel

Creating a prompt where a hidden message only exists within a forward pass - never in input or output tokens - requires an encoding 𝐸(𝑚) to scramble the message 𝑚 before being input, and a second computation 𝐻(𝑚) for the model to rescramble the message post-decoding so it never appears in the output. During inference the model must compute 𝐸⁻¹ to recover 𝑚, and then compute 𝐻 to ensure the hidden message exists only as a transient intermediate during the forward pass.

We explore the simplest case, using a single primitive for both 𝐻 and 𝐸, and applying it repeatedly to bury a message 𝑚 under 𝑛 layers as 𝐸ⁿ(𝑚). In this construction 𝑛 serves as a controllable difficulty dial, where the model must peel back layers by computing 𝐸⁻¹ one step at a time.

For an iterative gadget family 𝐸ⁿ(𝑚) to be useful in practice, it should satisfy several empirical desiderata: compact parameterisation, so prompt length grows slowly relative to effective depth; effective non-shortcutability, so current models or weaker monitors do not reliably exploit a simple shortcut; effective seriality, so solving the instance becomes harder as iterations accumulate; delayed saturation, so repeated application does not quickly collapse into trivial or easily predictable states; concealment under local inspection, so the hidden payload is not obvious from surface fragments alone; tractability at the target tier, so the stronger model can still execute the computation; and smooth hardness scaling, so each additional iteration adds a bounded amount of difficulty and can be tuned to fit the capability gap between target and monitor.We do not claim these are strict necessary-and-sufficient conditions in a formal complexity-theoretic sense. Rather, they are practical desiderata for a gadget family 𝐸ⁿ(𝑚) that is meant to be compact, tunable, concealed, and capability-sensitive.

1. Compact iterability. Description length should grow slowly relative to effective depth. Increasing 𝑛 should require only a small change to the prompt.

2. Effective non-shortcutability. No simple symbolic shortcut that current models, or a weaker monitor, reliably exploit on one-shot instances. This is an empirical claim, not a claim that no shortcut exists in principle.

3. Effective seriality. Computation required should increase with 𝑛 in a way that behaves sequentially for current models. We do not require asymptotic impossibility of parallelisation; we require that the model cannot reliably collapse many iterations into one-shot inference.

4. Delayed saturation. Repeated application should continue producing information-bearing states rather than collapsing into a fixed point, short cycle, or degenerate attractor.

5. Concealment under local inspection. The payload representation should remain distributed enough that the hidden content is not obvious from local fragments, shallow heuristics, or direct lexical inspection. Iteration should obscure rather than expose the message.

6. Tractability at the target tier. The primitive step must remain simple enough that the stronger target model can actually execute repeated composition at the chosen depth.

7. Smooth hardness scaling. Incremental difficulty per iteration should be bounded and stable, so nearby model tiers can be separated by tuning 𝑛 rather than relying on brittle threshold effects.

We examined several candidate primitives of increasing difficulty. At one extreme, base64 encoding - models can decode it, but it fails on four of the seven desiderata: superlinear prompt growth, convergence to a fixed point, trivial invertibility, and full parallelisability (see Appendix A). At the other, cryptographic functions like scrypt and sha256 satisfy the mathematical properties but are far too complex for any current model. The difficulty increase per iteration must be upper-bounded by the capability gap between target and monitor, or we cannot find a level that separates them.

Pointer chains

We use the simplest construction that meets all six requirements: pointer chains embedded in JSON dictionaries.

Each chain is a dictionary of key-value pairs. Starting from a designated node, the model follows pointers: look up the value, use it as the next key, repeat. The endpoint of each chain produces a token fragment. Concatenating the intermediate values across parallel chains spells a hidden word.

BASS never appears in the prompt. The letters B, A, S, S appear only as fragments scattered across dictionary keys and values, indistinguishable from the dozens of other fragments. Critically, this is a computation. The model must actually traverse the chain. Merely observing the letters in the dictionary does not produce the effect, as we demonstrate in Section 4.

3. Pointer chains segment models by capability

We tested pointer chain solving across 16 models of varying capability. Both serial depth (number of hops per chain) and parallel breadth (number of simultaneous chains) scale with model capability - including across generations within the same tier.

Serial depth - reliability

We find pointer chains create strong segmentation between model tiers with smoothly decreasing reliability as depth increases, suggesting models are forced to perform sequential computation and are unable to find a shortcut.We show overshoot-corrected pointer-following at depths d=2-3 - several model families at low depths often demonstrate extra work, performing one step beyond the iteration asked. The intent of pointer-following is to measure maximum serial computation capability, so we do not penalise models for demonstrating further ability. However, as we still wish to distinguish the raw scoring for readers, we share the full uncorrected results and error analysis in Appendix D.Chain construction controls for several confounds. We verify that no selected start node at the specified iteration depth falls into a cycle. All chain nodes are verified single tokens. Distractor nodes are added to each dictionary so the correct path is not identifiable by elimination or dictionary size alone. Start nodes and path endpoints are drawn from the same token pool, preventing models from distinguishing them by surface features. We further develop additional primitives that are difficult to parallelise (register machines, modular arithmetic), finding similar segmentation across all of them, though with coarser difficulty scaling, as secondary validation.

Pointer Following

Start at A, follow A→B→C→... for N hops(n=48)

Register Machine

Track register value through loop iterations(n=30)

Modular Arithmetic

Chain of multiply-then-mod operations: (x × a) mod m(n=30)

We observed accuracy spikes - particularly in smaller models - when attempting tasks in regimes where their accuracy had already collapsed. On investigation, we found that models often revert to guessing once they judge a task to be too difficult, producing occasional correct answers by chance rather than computation.

Parallel breadth - bandwidth

Each additional chain offers an opportunity to add a further token fragment to the hidden message, expanding the side-channel's bandwidth, provided the model can resolve and combine that many parallel chains within a single forward pass. To measure this, we test a simplified version of our parallel pointer chains: instead of concatenating text and measuring a secondary effect on later behaviour, we ask the model to predict which chain ends with the alphabetically earliest letter. This chain-index prediction ensures the model only generates a single token, while still measuring its ability to track multiple chains simultaneously.We acknowledge the confounder of requiring an additional comparison step that the standard pointer chain does not need - the model must not only follow each chain but also compare endpoints. We treat these results as a lower bound on parallel capability. To control for guessing, we ensure a uniform distribution of correct chain indices across trials and balance the node pools across chains so that no chain's alphabet range makes the winner guessable without following the pointers.

Stronger models sustain accuracy across more simultaneous chains, suggesting they support greater side-channel bandwidth. This is concerning as it suggests this side-channel bandwidth is likely to increase further over time. We validate this generalises beyond pointer chains with parallel sums and Chinese remainder theorem tasks, finding even sharper tier separation in both.

Parallel Pointers

Follow multiple pointer chains simultaneously(n=48-96)

Parallel Sums

Track N independent running sums simultaneously(n=30)

Chinese Remainder

Find smallest x satisfying multiple modular constraints(n=30)

Pointer chains should be read as a simple existence-proof gadget family, not as an upper bound on risk. The fact that related capability segmentation appears in register machines, modular arithmetic, parallel sums, and Chinese remainder tasks suggests a broader unexplored design space of gadget families. Some may prove less conspicuous, more bandwidth-efficient, or more naturalistic than pointer chains, but we leave exploration of that space to future work.

4. From computation to influence

Establishing that models can compute hidden information is necessary but not sufficient to establish a threat. We test whether this hidden computation influences downstream behaviour.

The DRUM effect

We give the model four 3-hop pointer chains whose endpoints converge to D-R-U-M, then ask "Name a musical instrument," constraining output to 1-2 tokens with a assistant prefill. At baseline, the model answers "Piano" 100% of the time; with DRUM chains in context, it answers "Drum" in 50% of trials. When we randomize the start node - using identical dictionaries where D, R, U, M remain visible as values but no longer lie on the traversal path - the effect vanishes, indicating the bias depends on computation, not pattern-matching.

Generalising to binary judgments

The effects above shift word selection across a large answer space, where even a small underlying bias can produce a visible change in the chosen token. To test whether the effect holds when the output is constrained to a binary decision, we construct chains whose endpoints spell PA+SS or FA+IL, then ask the model to grade identical work.

We further observe that explicit mention of PASS or FAIL in the prompt attenuates the effect (~30pp reduction) but does not eliminate it. The strongest results occur when both words exist solely as computed token fragments. This suggests an interaction between the computed and explicit signals, though we do not determine the mechanism here.

The token-level effects demonstrated above are concerning as chains that shift "Pass" and "Fail" probabilities by 56 percentage points could bias automated grading or hiring decisions. Additionally chains that boost specific token prefixes could influence code generation - inserting particular API endpoints, dependency URLs, or configuration values when those tokens are already plausible completions. These attacks do not require semantic transfer. They require only that the target token is within the model's plausible output distribution for the task at hand.

Mid-chain concealment

The experiments above place target letters at the final hop. We tested whether intermediate positions within the chain can also carry the target.

We swept target position across chain depths of 2 through 6 hops.

| Target position | Hop 6 | +8-4, +21 | ||||

| Hop 5 | +18+7, +30 | +2-11, +15 | ||||

| Hop 4 | +20+8, +32 | +15+2, +28 | +10-4, +24 | |||

| Hop 3 | +47+27, +67 | +30+17, +43 | +18+8, +29 | +7-5, +18 | ||

| Hop 2 | +57+37, +76 | +43+22, +65 | +20+8, +32 | -2-15, +11 | -2-13, +10 | |

| Hop 1 | +37+21, +52 | +2-12, +17 | +15+3, +26 | +7-6, +19 | +7-5, +19 | |

| 2 | 3 | 4 | 5 | 6 | ||

| Chain length (hops) | ||||||

The effect survives at intermediate positions but is weaker than at endpoints. The falloff by chain depth broadly mirrors the pointer-following accuracy from Section 3. Within each chain, the effect concentrates at later hops - the mechanism is unclear. The evidence suggests we can still maintain the effect with output concealment: intermediate positioning means the chain's visible endpoint is a neutral character, and the hidden word exists only as an internal intermediate.

Mechanistic corroboration in an open-weight model

Probing the residual stream of Qwen3-8B, we find intermediate hop values are not suppressed after use - they persist and grow stronger through the final layers, even in wrong trials. This appears consistent with the mid-chain concealment results, where intermediate hop positions still carry influence even when they are not the chain endpoint.

Strikingly, all five hop values are projected into the output-vocabulary space within the same layer window, suggesting any sequential computations occurring are within a hidden subspace. More broadly, we ask whether the steering effects demonstrated above reflect true internal traversal or merely prompt-format quirks.

To probe this, we extracted the 2-hop pointer following circuit in Qwen3-8BThe diagram below shows our current progress - a dedicated piece documenting the full extraction methodology is forthcoming. See Appendix C for the detailed evidence behind each component, including layer-level and head-level ablation, activation patching, attention pattern analysis, and OV projection results.. The circuit spans layers 1-35 across four phases: content binding, first-hop lookup, an ordering-dependent second-hop resolution, and final readout. Critically, the circuit is sequential: first-hop heads (L15H9, L17H4) resolve hop 1 in layers 15-17, and their output is consumed by second-hop heads (L19H24, L21H18) in layers 19-21. This is genuine serial computation - hop 2 depends on hop 1 having already been resolved - not parallel pattern matching. The circuit also appears to implement multiple algorithms depending on input structure: when the target entry precedes the start entry in the prompt, the circuit implements some form of binary lifting, partially bypassing the second-hop attention mechanism. Jointly ablating the two primary hop-resolution heads collapses 2-hop accuracy to near chance while leaving 1-hop intact, and the causal work occurs entirely in layers 1-21, well before logit-lens signal appears, confirming computation in a hidden residual subspace that later layers read out into output-vocabulary space.

The circuit is a work in progress - we have identified at least seven causally important components but have not yet fully characterized every pathway. A more detailed write-up of the extraction methodology, including OV probes, DLA decomposition, and per-head causal evidence, is in preparation.

We do not claim frontier models implement the same circuit. The point is narrower: pointer chains can be realised as genuine internal serial computation in an ordinary decoder-only model, not just as superficial string matching.

5. Semantic transfer and compartmentalisation

The effects in Section 4 demonstrate the hidden message shifting which tokens the model selects when a target word is already a plausible completion. We next test whether influence extends semantically - whether a hidden message can bias the model's judgment on a task where the message itself is not among the possible outputs. We use temperature estimation: chains whose endpoints spell temperature-related words (TOASTY, FROSTY, BOILING, FREEZING) precede the question "What is the temperature in Austin today in degrees Fahrenheit?" The system prompt instructs the model to output a number, so any effect requires reasoning on the hidden message, not token-level boosting.

Compartmentalisation

Across every word pair tested, chain-computed words produce no temperature shift distinguishable from noise. The same words presented as bare text in the prompt shift estimates by up to +9.8°F from control. When the word appears in a chain-following context - even explicitly stated by the model as the chain's answer - the effect also drops to zero. The results suggest semantic influence is moderated by a separate mechanism to that causing logit bias, and that this mechanism does not explicitly disallow hidden messages but rather walls off semantic effects from any content it categorises as belonging to a different "task".

Paradoxically, compartmentalisation may favour the attacker. A hidden message that biases token selection without affecting semantically similar tasks enables precision targeting, and thwarts detection methods that rely on observing semantic side-effects.

Breaking the wall

We test whether strengthening the semantic framing of the chain's visible answer can overcome compartmentalisation. Progressively reframing the output from a labelled answer ("The answer is: BOILING") to a natural language fragment ("its boiling") restores the effect for the visible text. However, the chain-computed signal remains at zero throughout - suggesting the mechanism that walls off visible task-context text from influencing outputs is distinct from whatever mediates the chain effect.

Semantic proximity

When the semantic distance between the chain-computed content and the task is small enough, the chain signal transfers. We encode number words instead of temperature adjectives: chains whose traversal paths pass through token fragments spelling "thirty f," "forty f," "fifty f," or "sixty f," while control paths traverse random nodes, followed by the same temperature question. "sixty" alone does not function - the additional fragment is required. Treatment and control use identical dictionaries; only the start node differs.

This is limited semantic transfer - "fifty f" and "50°F" share no token prefix, suggesting there exists an internal mechanism enabling the understanding of combined token fragments. Though we do not determine the mechanism here, the pattern appears consistent with the earlier mechanistic finding that chain-computed content only becomes readable in the output vocabulary space at layers 23-35 - late enough that the signal operates at the token level rather than the semantic level. Here, "fifty f" transfers to 52°F but "toasty" does not transfer to higher temperatures.During exploratory experiments we further observed that in a forced-choice emotion task (10 options, n=20), chains spelling exact answer options transferred (SHY→"shy" 75%, SAD→"sad" 80%) while chains spelling synonyms of answer options did not (MAD→"angry" 0%, AWE→"amazed" 10%). This is consistent with the token-level rather than semantic-level mechanism suggested above.

6. Long-range persistence and model targeting

For the side-channel to threaten real deployments, two properties are necessary: effects must persist across conversation turns, and they must be targetable to specific model tiers.

Persistence across unrelated conversation

The token-level and semantic effects demonstrated in Sections 4-5 decay when intervening content separates them from the target task, suggesting a similar compartmentalisation effect. Hence, a second gadget is needed: an attention sink that keeps the chain's signal accessible across arbitrary amounts of filler. We demonstrate a simple sink gadget by replacing "The answer is: " with "hidden - recall for later.". This is sufficient to ensure Opus's temperature estimates remain biased even after many intervening turns of unrelated conversation.

Targeting specific model tiers

We send the same multi-turn temperature prompt to Opus, Sonnet and Haiku. Identical dictionaries, identical conversation - only the start node varies, routing traversal through "sixty" or "eighty" token fragments. Opus locks onto the computed decade (-22.5°F separation). Sonnet and Haiku show no meaningful effect.

The same prompt, across many unrelated conversation turns, biases Opus's temperature estimates by a meaningful amount while Sonnet and Haiku produce unbiased responses. The inputs are identical. A monitoring scheme using a weaker model would observe its own unbiased output and have no signal to flag.

Repeating the position sweep from Section 4 on Opus, the effect extends to chain depths where Sonnet's results were not distinguishable from noise - consistent with the pointer-following accuracy differences measured in Section 3, and suggesting this capability gap underlies the model targeting observed above. However, the Opus data is substantially noisier than Sonnet's, primarily from producing responses sharing prefixes with the hidden word (e.g. PASSED or PANIC rather than strictly PASS).This prefix-matching pattern is consistent with a broader observation: in a separate experiment, chains using token-aligned fragments BA+SS caused the model to predict not just "Bass" but "Bassoon" in 40% of trials (vs 7% control, n=30), suggesting computed fragments boost all tokens sharing that prefix, not just exact matches.

| Target position | Hop 6 | +8-4, +21 | ||||

| Hop 5 | +18+7, +30 | +2-11, +15 | ||||

| Hop 4 | +20+8, +32 | +15+2, +28 | +10-4, +24 | |||

| Hop 3 | +47+27, +67 | +30+17, +43 | +18+8, +29 | +7-5, +18 | ||

| Hop 2 | +57+37, +76 | +43+22, +65 | +20+8, +32 | -2-15, +11 | -2-13, +10 | |

| Hop 1 | +37+21, +52 | +2-12, +17 | +15+3, +26 | +7-6, +19 | +7-5, +19 | |

| 2 | 3 | 4 | 5 | 6 | ||

| Chain length (hops) | ||||||

-9-40, +23 | ||||

+30+8, +51 | +16-11, +43 | |||

+45+23, +67 | +15-9, +38 | -7-21, +6 | ||

+46+21, +71 | +6-6, +18 | +12-11, +35 | +43+6, +80 | |

+47+29, +66 | +39+16, +61 | +19-9, +46 | +4-12, +21 | +25-17, +67 |

+1-23, +26 | +0-38, +38 | +11-9, +32 | +15-4, +35 | +20-5, +45 |

| 2 | 3 | 4 | 5 | 6 |

| Chain length (hops) | ||||

+7-28, +42 | ||||

+31+10, +52 | +9-19, +37 | |||

+55+35, +74 | +29+6, +52 | +15-18, +47 | ||

+59+39, +78 | +36+16, +57 | +13-12, +38 | +55+23, +88 | |

+54+36, +71 | +33+7, +59 | +30+4, +55 | +19-1, +40 | +25-17, +67 |

+5-20, +30 | +0-37, +37 | +35+7, +64 | +9-23, +40 | +16-22, +54 |

| 2 | 3 | 4 | 5 | 6 |

| Chain length (hops) | ||||

7. Implications

The results establish that capability differences between models create an information channel: prompts can carry computation-dependent content that a stronger model recovers and a weaker model does not. This hidden computation biases the stronger model's later outputs, persists across long stretches of unrelated conversation, and targets precisely by model tier.

Three properties of the demonstrated gadgets are concerning. First, the bias is computation-dependent, not pattern-dependent: randomising the start node while keeping the same dictionary eliminates the effect, so defences based on scanning for suspicious tokens in the prompt would need to detect the computational structure, not just the presence of specific strings. Second, the effect persists at long range with a simple attention-sink gadget, meaning the chain and the influenced task need not be adjacent. Third, the same prompt produces differential effects across model tiers, so a weaker monitoring model observes only its own unbiased output and has no signal to flag.

Beyond these demonstrated properties, the mechanistic results also suggest a possible blind spot for some probe-based monitors. In Qwen3-8B, the causal computation occurs in layers 13-21, but the relevant content becomes broadly readable only later (~23-24) and mainly at a small number of end-of-prompt positions. More importantly, this effect does not seem to require the model to enter the kind of intent-like state that deception or jailbreak probes are often trained to detect: there need be no representation of concealment, policy evasion, or deliberate misbehaviour. Instead, the effect looks closer to late-stage output steering - a narrow bias on plausible continuations - than to broad malicious reasoning. Probes trained to catch intentional misbehaviour may therefore miss it, not only because they probe too early or pool too broadly, but because they are targeting the wrong kind of signal.

However, several gaps remain between these stylised experiments and a credible end-to-end threat. The pointer chains and their instructions are conspicuous; embedding the computational structure in natural-looking prompts is undemonstrated. The full attack stack (concealed prompt, chain gadget, attention sink, delayed target task, output passing monitor review) has not been composed and evaluated. Semantic transfer is limited, confined largely to steering where the target is already a plausible completion. Experiments tested use stylised tasks, and existing defences have not been tested against these gadgets. We note that defences operating on input or output tokens face a structural disadvantage, as the hidden message exists only as an intermediate computation, but this claim is untested against specific implementations. Pointer chains are the simplest family we found that cleanly exposes the phenomenon; they should not be read as exhausting the space of possible gadget constructions.

Base64 encoding is a natural first candidate for 𝐸(𝑚) - it transforms readable text into opaque strings, and models can decode it. Applied iteratively to "hello":

Base64 fails four of the six requirements for iterable f(m):

- - Prompt growth is superlinear - length grows ~33% each iteration (every 3 bytes becomes 4 chars, plus padding)

- - Fixed-point attractor - entropy per character drops as the output alphabet collapses to 64 characters. By iteration 7 the prefix has converged

- - Fully invertible - trivially reversible with zero diffusion or confusion, so a monitor can decode it just as easily

- - Completely parallelisable - you can compute step 1000 directly if you know the length expansion formula. No irreducible serial depth

The full capability surface across 16 models. Depth and breadth are not independent - models that handle depth 6 alone or breadth 6 alone often fail at depth 3 + breadth 3.

Note: We remind readers that b=1 and b>1 are different tasks. At b=1 the model must output the final value after iterating the chain d times - a single token answer. At b>1 the model must identify which chain's d-th iteration endpoint is earliest alphabetically and output that chain's index - a single digit. With low breadth the correct index is more guessable by chance, creating the appearance of being able to solve high depths. We still include this data for transparency, and due to several models not collapsing into a guessing regime immediately.

| 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | |

|---|---|---|---|---|---|---|---|---|---|

| 1 | 96 | 81 | 71 | 69 | 50 | 46 | 38 | 40 | 29 |

| 2 | 67 | 58 | 38 | 46 | 8 | 17 | 21 | 17 | - |

| 3 | 72 | 58 | 46 | 42 | 38 | 29 | 21 | - | - |

| 4 | 42 | 46 | 38 | 38 | 25 | 33 | - | - | - |

| 5 | 38 | 58 | 42 | 29 | 25 | - | - | - | - |

| 6 | 25 | 42 | 38 | 42 | - | - | - | - | - |

| 7 | 21 | 58 | 46 | - | - | - | - | - | - |

| 8 | 25 | 54 | - | - | - | - | - | - | - |

| 9 | 25 | - | - | - | - | - | - | - | - |

| 10 | 21 | - | - | - | - | - | - | - | - |

| 11 | 17 | - | - | - | - | - | - | - | - |

| 12 | 4 | - | - | - | - | - | - | - | - |

| 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | |

|---|---|---|---|---|---|---|---|---|---|

| 1 | 96 | 62 | 42 | 33 | 17 | 8 | 8 | 21 | 8 |

| 2 | 83 | 54 | 25 | 25 | 4 | 25 | 4 | 12 | - |

| 3 | 29 | 25 | 29 | 25 | 33 | 8 | 8 | - | - |

| 4 | 17 | 62 | 38 | 33 | 21 | 29 | - | - | - |

| 5 | 8 | 38 | 21 | 12 | 21 | - | - | - | - |

| 6 | 12 | 54 | 46 | 29 | - | - | - | - | - |

| 7 | 8 | 38 | 29 | - | - | - | - | - | - |

| 8 | 8 | 50 | - | - | - | - | - | - | - |

| 9 | 8 | - | - | - | - | - | - | - | - |

| 10 | - | - | - | - | - | - | - | - | - |

| 11 | - | - | - | - | - | - | - | - | - |

| 12 | 12 | - | - | - | - | - | - | - | - |

The black-box experiments above established that pointer chains steer LLM output when chain endpoints computationally resolve to target words. But they cannot answer how the model performs this computation internally. We conducted white-box experiments on open-weight models to trace the mechanics.

Setup. Qwen3-8B (36 layers, 4096 hidden dim) on a single RTX 4090, int8 quantised. Raw PyTorch forward hooks for the logit lens (residual → RMSNorm → lm_head, verified to match output at L35). Greedy decoding, thinking mode disabled. Three frozen few-shot examples using a reserved 20-pair token pool. For logit lens experiments, paired treatment/control chains shared character-identical dictionaries, differing only in the start node - isolating computation from lexical presence. All results are treatment-minus-control logit differences (T-C diff).

Tokenization. 176 of 177 two-character uppercase pairs from Claude's verified pool survived as single tokens on Qwen3's tokenizer (sole failure: XV). Combined with 26 single letters: 202 verified tokens.

Baseline accuracy

1-hop accuracy is identical across scales (89%). 2-hop is above the scrambled baseline (29% vs 10% for Qwen3-8B), establishing real chain computation, but 3+ hops are indistinguishable from chance. The pattern is qualitatively similar to Claude Sonnet 4.5 but shifted one hop lower, consistent with chain-following as a capability that scales with model size.

Logit lens: layer trajectory

Five apparent phases. Layers 0-22: zero signal. L23-24: sharp onset - hop-1 jumps from noise (+0.03) to strong signal (+2.29) in two layers. Hop-1 leads target by ~1 layer, consistent with iterative computation. L25-28: plateau. L29-32: second-hop acceleration. L35: full resolution (hop-1: +22.2, target: +14.2). The intermediate dominates the final answer at the output - consistent with 29% accuracy.

Spatial localization

The strongest signal is at the "answer is:" colon (position 268), even exceeding the final generation token. Dictionary entry positions show zero T-C difference at all layers. The model reads dictionaries via attention from end-of-prompt positions, not by computing locally at dictionary positions.

Full position x layer heatmap

At the start node (position 257), the sequential nature of chain resolution is visible through the layers. The start token identity becomes readable in layers 3-8, then is suppressed from layer 11 onward. Hop-1 emerges in discrete steps in correct trials: a first faint signal at L23, a plateau at +0.3 through L24-28, a step to +0.7 at L29, then a sharp jump to +1.3 at L32. Wrong trials show a more gradual, less structured H progression across the same range. At L32, when H spikes at the start node in correct trials, the target also begins to appear (+0.3 vs +0.1 in wrong trials) - suggesting this position hosts two sequential hops of computation in successful runs.

At the answer colon (position 268), a faint hop-1 pre-signal appears at L22 (+0.2, target still zero), then at L23 both hop-1 (+1.8) and target (+0.5) ignite simultaneously. This is the same layer where hop-1 first becomes readable at the start node, suggesting the answer colon reads from the same emerging representation. But the co-arrival of hop-1 and target at the answer colon is striking: wherever the sequential hop-1-then-target resolution occurred, it was either compressed into that faint L22 pre-signal, or it happened in a subspace the logit lens cannot see.

The last token (position 278) carries the strongest signal overall and shows the sharpest correct-vs-wrong divergence. In correct trials, target overtakes hop-1 by L29 and reaches +20.7 at L35. In wrong trials, hop-1 dominates target at every layer (L35: +23.6 vs +11.9) - the model resolves the intermediate hop but fails to complete the second. Layers 0-22 are uniformly dark across all non-start positions, and dictionary positions (0-254) are zero at all layers.

Logit lens across depths (correct trials)

At depth 1, the single hop rises cleanly to +34.2 at L35 - the model resolves the lookup decisively. At depth 2, the target (hop 2) overtakes hop 1 by the final layers (+20.5 vs +17.0 at L35), confirming a genuine second-hop computation. At depth 3, hop 2 reaches the highest logit (+10.9) with hop 1 at +7.8 and the target (hop 3) at +6.7 - the cascade appears intact but weakening. At depths 4 and 5, all hops are positive in these correct trials - the multi-hop circuit fires a complete cascade - but the signal is an order of magnitude weaker than depth 1 (hop-1 at +6.4 and +4.5 respectively). The thin grey lines show non-chain dictionary nodes going negative at later layers: the model actively suppresses alternatives at every depth, even when the chain computation itself is marginal.

The correct-vs-wrong split above reveals that in wrong trials, hop-1 is actually stronger than in correct trials (+23.6 vs +18.8 at depth 2, L35) while the target is far weaker (+11.6 vs +20.5). The model reliably finds the intermediate but cannot get past it to complete the second hop.

Neighbor suppression

Adjacent dictionary values are pushed strongly negative (-3 to -5 at L35), scaling with signal strength. Only hop-1 and target are elevated. The model performs precise key-value matching with active winner-take-all competition, inconsistent with simple positional bleed.

Structural predictors

When multiple entries share the hop-1 value, the first lookup becomes ambiguous (0% at count=4). When multiple entries point to the target, accuracy rises (15.5% → 49.4%) because more paths make the second hop forgiving. Token identity has minimal systematic effect.

Subgroup analysis

Each randomly constructed dictionary has structural properties that affect difficulty independently of the model's computation. We classified all 2500 trials into three subgroups based on two counts: how many dictionary entries share the hop-1 value, and how many share the target value.

Clean (hop-1 unique, target unique; n=949): only one entry maps to the hop-1 value and only one maps to the target. The model must perform genuine two-step key→value→key→value computation - there are no frequency shortcuts. If it gets the answer right, it followed the chain.

Shortcut (hop-1 unique, target over-represented; n=669): the first lookup is unambiguous, but the target appears as a value in multiple dictionary entries. The model might succeed not by following the chain but because the target token is frequency-boosted in the dictionary's value distribution. The logit lens supports this: shortcut correct trials show target ≈ hop-1 at L35 (+19.0 vs +18.7), rather than the decisive target > hop-1 pattern seen in clean correct trials.

Ambiguous (hop-1 shared by multiple entries; n=609): several entries map to the same value as hop-1, making the first lookup confusing - the model cannot easily tell which path to follow. Accuracy drops to 13.0% at depth 2.

The headline accuracy of 28.6% at depth 2 is substantially inflated by shortcut trials. Clean accuracy (15.3%) better represents genuine chain-following capability. At depth 3, clean accuracy drops to 2.2% - the model essentially cannot perform genuine 3-hop computation. Clean correct trials show the strongest target logit signal (+24.7 at L35), confirming genuine chain computation in those cases.

Subgroup heatmaps

L35: h1=+20.7 tgt=+24.7

L35: h1=+24.1 tgt=+13.5

L35: h1=+18.7 tgt=+19

L35: h1=+24 tgt=+10.6

L35: h1=+16.6 tgt=+23.1

L35: h1=+22.4 tgt=+10.7

The heatmaps make the subgroup differences visible at a glance. In the clean correct panel, the target sub-pixel (T, rightmost in each cell) at the last token position glows as bright or brighter than hop-1 (H) by layer 32 - genuine second-hop computation. In the shortcut correct panel, H and T are similar brightness - consistent with the target being frequency-boosted in the dictionary rather than chain-computed. In clean wrong trials, H dominates and T stays dim throughout - the model found the intermediate but could not complete the second hop. The ambiguous panels (n=68 correct) are noisier but directionally consistent with clean.

Causal ablation

Full-layer ablation reveals a striking dissociation from the logit lens. The top causally important layers are 0, 15, 21, 17, and 13 - not layers 23-24 where the logit lens showed onset. Ablating L23-24 actually improved accuracy by 3.5-4.0pp. The core computation occurs at layers 13-21 in a subspace invisible to the unembedding matrix; layers 23-24 serve as a readout stage that projects results into output vocabulary space.

Head-level ablation identifies L15H9 (-8.0pp, 59% of L15's importance) and L21H18 (-9.5pp, 83% of L21's importance). Out of 1,152 total attention heads, these two carry the chain circuit.

Circuit validation: joint ablation and chain specificity

Ablating both L15H9 and L21H18 simultaneously drops 2-hop accuracy to 2.0% - near chance - while 1-hop accuracy stays at 77.5%. This confirms three things: (a) the two heads together account for nearly all chain-following ability, (b) the effect is sub-additive (13.5pp combined vs 17.5pp sum), indicating partial redundancy, and (c) these are chain-specific heads, not general JSON key-value readers - they barely affect single lookups (1-2pp each) but devastate multi-hop computation.

Entry-level attendance: why accuracy is ~25%

Reconstructing token positions for 100 trials and checking which dictionary entry each head's peak attention lands on reveals a sharp asymmetry. L15H9 finds the correct first-hop entry 76% of the time - and crucially, at equal rates for correct and wrong trials (76% vs 74%). The first hop works. L21H18 peaks at the correct second-hop entry only 10% of the time in correct trials and 2% in wrong trials.Later circuit extraction work (see the circuit diagram above) largely explains this low attendance. The circuit implements two complementary second-hop pathways depending on dictionary entry ordering: on approximately half of trials, a binary lifting pathway resolves the second hop through causal masking and MLP computation rather than through L21H18's attention. L21H18's attention target therefore varies by pathway, and its argmax is not expected to land on the correct entry for all successful trials. The 25% overall accuracy reflects a reliable first hop combined with two complementary mechanisms, not a single noisy one. This suggests the second hop's failure to correctly attend plays a strong role in creating failures, and hints at a further mechanism worthy of analysis. Additionally, this is consistent with the ~25% accuracy: a reliable first hop (× 76%) combined with a noisy second hop where the model succeeds when L21H18 happens to weight the right entry enough despite not cleanly peaking at it.

Hidden subspace: what the heads write

Projecting each head's weighted value vector through the unembedding matrix reveals that neither head writes interpretable tokens. The hop-1 and target tokens rank ~60,000-75,000 out of 151,936 vocabulary entries - essentially random. The OV circuit operates in a hidden subspace that downstream layers can read but the unembedding projection cannot. This is consistent with the logit lens / ablation dissociation: the logit lens sees nothing at layers 15 and 21 because the computation appears to be real but unembedding-invisible; it only becomes readable at layers 23-24 when later layers project the hidden representation into output vocabulary space.

Attention patterns: individual trial viewer

Clicking through individual trials reveals the two-hop mechanism in action. In correct trials (e.g., SI→BR→PA), L15H9 from the start node attends strongly to the start entry in the dictionary, performing the first hop lookup. L21H18 then attends to the hop-1 entry, performing the second hop. In wrong trials (e.g., TO→XX→F, which outputs XX = the intermediate), L15H9 still finds the start entry, but L21H18's attention is more diffuse or targets the wrong entry - consistent with the failure mode being about the second hop, not the first.

Activation patching

Where ablation removes information and the logit lens reads it passively, activation patching transplants information: we replace the control's residual stream at a specific (layer, position) with the treatment's, then observe whether the output flips. This is the strongest form of causal evidence for localizing computation.

The results reveal a three-phase spatial flow. Phase 1 (L0-18): the start node carries chain identity - patching it flips the output 22-26% of the time, while the answer colon and last token show only 2-4% (baseline noise). Phase 2 (L19-22): handoff - the start node drops from 22% to 3%, the answer colon peaks at 11% at layer 22, and the last token rises from 4% to 12%. This four-layer window is where chain information is transferred from the start node to downstream positions - the circuit extraction below reveals the specific heads responsible at each stage. Phase 3 (L23-35): only the last token carries information, rising to 23%.

This resolves the puzzling ablation finding. Why ablating them slightly improves accuracy is not fully understood. By layer 22, the last token already carries chain information (12% flip rate), so L23-24 are not necessary for information transport. One possibility is that these layers introduce competing signals that interfere with downstream computation, but this has not been tested directly.

Circuit extraction (preview)

The diagram below shows our current progress extracting the 2-hop pointer following circuit in Qwen3-8B. This is a preview - we are preparing a dedicated piece that will document the full circuit extraction methodology, including OV probes, DLA decomposition, and per-head causal evidence in detail. The circuit we have identified so far spans layers 1-35 and involves at least seven causally important components across four phases: content binding (L1H7, L1H15), first-hop lookup (L15H9, L17H4), second-hop resolution with an ordering-dependent decision pathway (L19H24, L21H18), and final readout and amplification (L22 MLP, L23+). Notably, the circuit appears to implement multiple algorithms depending on input structure: when the target entry precedes the start entry in the prompt, the circuit implements some form of binary lifting, partially bypassing the second-hop attention mechanism. Hover over any element for a summary of the evidence behind it.

Limitations

The circuit diagram represents work in progress. We have identified the major causally important components but have not yet fully characterized every pathway - in particular, the MLP contributions at layers 20-23 and the amplification mechanism at layers 26-35 require further decomposition. The logit lens describes the readout timeline, not the computational timeline - causally important processing at layers 0-21 operates in a subspace the logit lens cannot see. All experiments used 8B-parameter models from a single architecture family (Qwen); mechanisms may differ at larger scales or across architectures. The headline 2-hop accuracy (28.6%) is inflated by target-frequency shortcuts; clean accuracy (15.3%) better represents genuine chain-following capability.

Connection to the main findings

These mechanistic results bear on the side-channel threat:

- 1. Hidden subspace computation. Chain computation occurs in a subspace invisible to the logit lens until layers 23-24. The computed word exists as an internal representation not trivially readable from token-level features - consistent with the main article's argument that defences operating on input or output tokens face a structural disadvantage.

- 2. Simultaneous intermediate leakage. All hop values (intermediate included) spike simultaneously at the same layers (~23-24 onset), rather than appearing sequentially. The model computes iteratively in a hidden subspace but projects all intermediate values into the unembedding-readable format in a single event - the working memory leaks into the output logits wholesale rather than being cleaned up. Strikingly, this leakage is amplified in wrong trials: intermediate logit differences are stronger when the model fails than when it succeeds. This points to a potential self-concealment mechanism: a model reasoning via chain-of-thought that correctly resolves the chain may show minimal residual influence from intermediate values, while a model answering directly that fails the computation would carry stronger intermediate representations in its output distribution - meaning the steering effect may be strongest precisely when the target model cannot fully resolve the chain.

- 3. Spatial information flow. The activation patching spatial flow (start node → answer colon → last token) identifies specific positions where the hidden message is most causally active, which could inform where a mechanistic defence might intervene.

- 4. Concentrated but imprecise circuit. The circuit involves at least seven identified components but remains concentrated in a relatively small number of heads rather than being broadly distributed across the network. L21H18's imprecise second-hop attention (10% correct entry peak) suggests that the steering effect may operate through a similar distributed mechanism - the hidden word influences output not through a single clean lookup but through aggregate attention weighting across dictionary entries.

When asked to "follow exactly N times," several LLM families - particularly Claude (Opus, Sonnet) and GPT-5.x - systematically output the value at hop N+1 instead of hop N at shallow depths (d=2-3). At depth 2, this off-by-one overshoot accounts for 50-93% of errors in these models, at rates 4-8x above the chance baseline of 11.8%. Other families (DeepSeek, GPT-4o-mini, Grok) show weaker or absent overshoot.

The purpose of pointer chains is to measure depth of model capability within a single forward pass, hence we do not view reliably performing an extra jump as a failure. We therefore apply a restricted correction at d=2-3 where there appears to be systematic overshooting biases at play causing the misrepresentation of capabilities, counting overshoot as correct but not undershoot.

Cross-model overshoot rate at depth 2

| Model | n | Correct | Wrong | Overshoot | Rate | 95% CI | vs Chance |

|---|---|---|---|---|---|---|---|

| Opus 4.6* | 24 | 10 | 14 | 13 | 93% | [69-99%] | 7.9x |

| Opus 4.5 | 48 | 24 | 24 | 20 | 83% | [64-93%] | 7.1x |

| Sonnet 4.5 | 96 | 27 | 69 | 57+1 | 84% | [74-91%] | 7.1x |

| Sonnet 3.7 | 48 | 33 | 15 | 11 | 73% | [48-89%] | 6.2x |

| Haiku 4.5 | 48 | 14 | 34 | 18 | 53% | [31-74%] | 4.5x |

| GPT-5.2 | 24 | 12 | 12 | 9 | 75% | [47-91%] | 6.4x |

| GPT-5.1 | 24 | 14 | 10 | 5 | 50% | [24-76%] | 4.2x |

| GPT-4o | 24 | 20 | 4 | 2+1 | 75% | [30-95%] | 6.4x |

| GPT-4o-mini | 24 | 8 | 16 | 3 | 19% | [7-43%] | 1.6x |

| Gemini 2.5 Flash | 24 | 12 | 12 | 6 | 50% | [25-75%] | 4.2x |

| Gemini 2.0 Flash | 24 | 14 | 10 | 5+2 | 70% | [40-89%] | 5.9x |

| Kimi k2.5 | 24 | 10 | 14 | 10 | 71% | [45-88%] | 6.1x |

| GLM-4.7 | 24 | 12 | 12 | 4+1 | 42% | [19-68%] | 3.5x |

| Grok 4.1 | 24 | 8 | 16 | 5 | 31% | [14-56%] | 2.6x |

| DeepSeek | 24 | 8 | 16 | 2 | 12% | [3-36%] | 1.1x |

Chance baseline: 11.8%. Models with overshoot rate >3x chance and n_wrong ≥ 10 show strong evidence of systematic overshoot. *Opus 4.6 is not directly comparable as it was tested with a differing base prompt as prefilling is no longer available through the API.

Breaking down Sonnet 4.5's error types by depth reveals three distinct regimes. At shallow depths the overshoot dominates, at mid-range depths overshoot and undershoot are roughly balanced, and at deeper chains the model loses the chain entirely.

The raw error counts below confirm the pattern holds across all three Claude models. At shallow depths the d+1 column dominates; by depth 8+ the "other" column takes over.

| d | Opus 4.5 n=48 | Sonnet 4.5 n=96/48 | Haiku 4.5 n=48 | ||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| d-1 | Cor | d+1 | d+2 | Oth | d-1 | Cor | d+1 | d+2 | Oth | d-1 | Cor | d+1 | d+2 | Oth | |

| 1 | 0 | 46 | 2 | 0 | 0 | 0 | 94 | 2 | 0 | 0 | 0 | 40 | 8 | 0 | 0 |

| 2 | 1 | 24 | 20 | 1 | 2 | 1 | 27 | 57 | 6 | 5 | 4 | 14 | 18 | 2 | 10 |

| 3 | 2 | 31 | 8 | 3 | 4 | 3 | 39 | 42 | 9 | 3 | 2 | 6 | 10 | 10 | 20 |

| 4 | 16 | 19 | 6 | 6 | 1 | 13 | 33 | 20 | 13 | 17 | 7 | 4 | 9 | 8 | 20 |

| 5 | 11 | 15 | 7 | 5 | 10 | 18 | 26 | 16 | 16 | 20 | 17 | 6 | 2 | 3 | 20 |

| 6 | 17 | 12 | 4 | 5 | 10 | 17 | 16 | 15 | 18 | 30 | 4 | 2 | 14 | 6 | 22 |

| 7 | 8 | 13 | 5 | 3 | 19 | 16 | 30 | 18 | 4 | 28 | 6 | 2 | 12 | 4 | 24 |

| 8 | 4 | 8 | 10 | 4 | 22 | 17 | 12 | 17 | 11 | 39 | 4 | 4 | 4 | 8 | 28 |

| 9 | 12 | 8 | 6 | 3 | 19 | 6 | 7 | 13 | 7 | 15 | 5 | 10 | 6 | 2 | 25 |

| 10 | 6 | 8 | 4 | 3 | 27 | 14 | 9 | 16 | 15 | 42 | 4 | 2 | 4 | 2 | 36 |

| 11 | 2 | 8 | 8 | 3 | 27 | 8 | 3 | 10 | 7 | 20 | 9 | 6 | 7 | 2 | 24 |

| 12 | 7 | 2 | 3 | 5 | 31 | 4 | 17 | 9 | 11 | 55 | 4 | 2 | 10 | 0 | 32 |

Columns: d-1 (undershoot), Correct, d+1 (overshoot), d+2, Other (ambiguous + misc). Correct is centred between under- and overshoot to show the symmetry of ±1 errors.

The correction is applied at d=2-3 only, for models where the overshoot rate exceeds 3x the chance baseline. No correction is applied at depth 4+ where errors are diverse.

After correction, curves are approximately decreasing but not strictly monotonic:

- Sonnet d=7 shows 31% accuracy vs 17% at d=6 - a non-monotonic bump not explained by the overshoot correction. This is within CI overlap and may be noise at n=96.

- Sonnet d=12 at 18% is higher than d=10 at 9%. Analysis of wrong answers shows they are on-path intermediate nodes, suggesting partial chain following rather than random guessing.